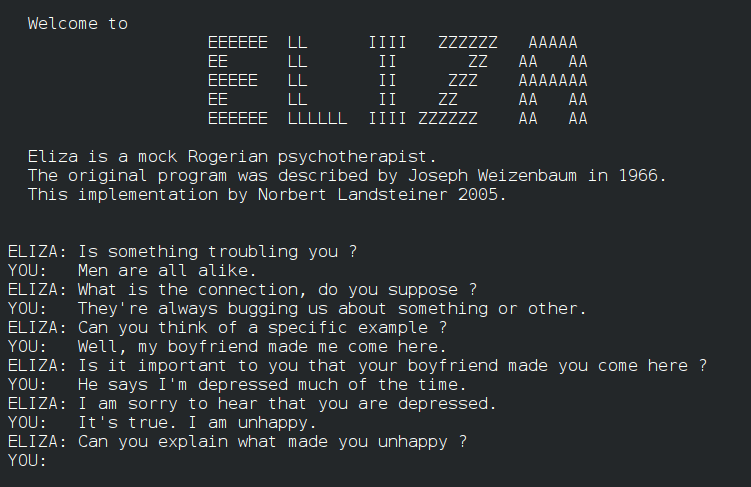

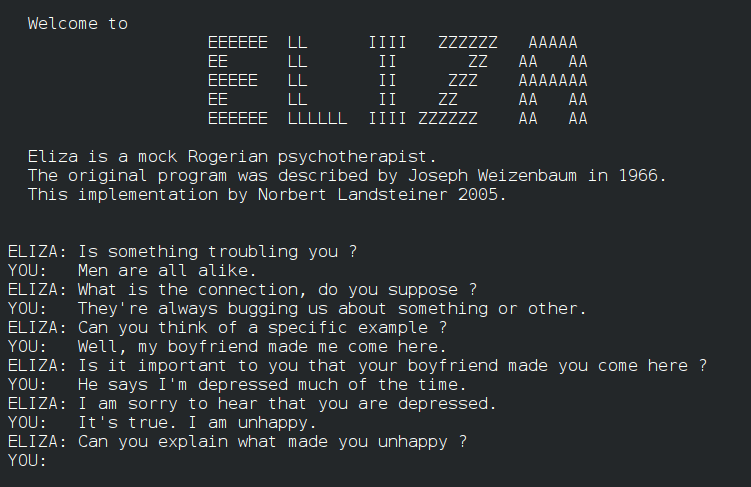

<image a conversation with eliza>

This lecture looks at Artificial Intelligence (AI). We discuss the definition of AI, its history, and AI techniques, and we explore the philosophy of mind to understand the goal of AI. The development of AI contains many interesting sub-topics. There are many "branches" of AI, including automatic reasoning, formulating AI as search problems, and optimization. Some sub-topics have become useful tools in their own right, such as evolutionary computation and image understanding. Currently the most popular form of AI is Generative AI, which is expected to have a strong impact on the future of human labor.

Artificial Intelligence (pptx)

Machine Learning (pptx)

Weak and Strong AI

Philosophy of mind

AI and Logic

ELIZA

Expert system

CYC

Perceptron

Optimization

Natural Language Processing

Computer Vision

===================================================

https://en.wikipedia.org/wiki/Philosophy_of_mind

from Wikipedia

"Philosophy of mind is a branch of philosophy that studies the ontology and nature of the mind and its relationship with the body. The mind–body problem is a paradigmatic issue in philosophy of mind, although a number of other issues are addressed, such as the hard problem of consciousness and the nature of particular mental states. Aspects of the mind that are studied include mental events, mental functions, mental properties, consciousness and its neural correlates, the ontology of the mind, the nature of cognition and of thought, and the relationship of the mind to the body."

Can machines think?

https://philpapers.org/browse/can-machines-think

Turing test

Chinese room problem

Chinese room argument

https://en.wikipedia.org/wiki/Chinese_room

"The Chinese room argument holds that a digital computer executing a program cannot have a "mind", "understanding", or "consciousness", regardless of how intelligently or human-like the program may make the computer behave. The argument was presented by philosopher John Searle in his paper "Minds, Brains, and Programs", published in Behavioral and Brain Sciences in 1980. Similar arguments were presented by Gottfried Leibniz (1714), Anatoly Dneprov (1961), Lawrence Davis (1974) and Ned Block (1978). Searle's version has been widely discussed in the years since. The centerpiece of Searle's argument is a thought experiment known as the Chinese room.

The argument is directed against the philosophical positions of functionalism and computationalism which hold that the mind may be viewed as an information-processing system operating on formal symbols, and that simulation of a given mental state is sufficient for its presence. Specifically, the argument is intended to refute a position Searle calls the strong AI hypothesis: "The appropriately programmed computer with the right inputs and outputs would thereby have a mind in exactly the same sense human beings have minds.""

ELIZA

https://en.wikipedia.org/wiki/ELIZA

<image a conversation with eliza>

Rule based

Find out about C

If B, then C (rule 1)

If A, then B (rule 2)

------------

conclusion: If A, then C

Question: Is A true? (data)
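The reasoning above works backward from the goal C to the data A. A minimal Python sketch of that idea follows. The names `RULES` and `backward_chain`, and the simplification of one rule per conclusion with no cycles, are assumptions made for illustration; this is not how any particular expert system is implemented.

```python
# Each conclusion maps to the premises that establish it.
RULES = {
    "C": ["B"],  # rule 1: if B, then C
    "B": ["A"],  # rule 2: if A, then B
}


def backward_chain(goal, rules, facts, trace):
    """Return True if `goal` follows from `rules` and the known `facts`.

    `trace` records the order in which goals are examined.
    Assumes at most one rule per conclusion and no circular rules.
    """
    trace.append(goal)
    if goal in facts:
        return True            # the goal is given as data
    if goal not in rules:
        return False           # no data and no rule: cannot be shown
    # The goal holds if every premise of its rule holds.
    return all(backward_chain(p, rules, facts, trace) for p in rules[goal])


trace = []
result = backward_chain("C", RULES, {"A"}, trace)
print(result, trace)
```

Starting from C, the search asks about B and then about A, which is the only point where the data is consulted. If A is not among the facts, C cannot be established.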

MYCIN

https://en.wikipedia.org/wiki/Mycin

Textbook (one chapter)

https://people.dbmi.columbia.edu/~ehs7001/Buchanan-Shortliffe-1984/Chapter-01.pdf

from Wikipedia

" MYCIN was an early backward chaining expert system that used artificial

intelligence to identify bacteria causing severe infections, such as

bacteremia and meningitis, and to recommend antibiotics, with the dosage

adjusted for patient's body weight — the name derived from the antibiotics

themselves, as many antibiotics have the suffix "-mycin". The Mycin system

was also used for the diagnosis of blood clotting diseases. MYCIN was

developed over five or six years in the early 1970s at Stanford

University. It was written in Lisp as the doctoral dissertation of Edward

Shortliffe under the direction of Bruce G. Buchanan, Stanley N. Cohen and

others."

EMYCIN

https://link.springer.com/chapter/10.1007/978-3-642-96868-6_66

"Perceptrons" (1969), a book written by Marvin Minsky (one of the "fathers of AI") and Seymour Papert

https://en.wikipedia.org/wiki/Perceptrons_(book)

Definition

https://en.wikipedia.org/wiki/Perceptron

"In machine learning, the perceptron (or McCulloch-Pitts neuron) is an algorithm for supervised learning of binary classifiers. A binary classifier is a function which can decide whether or not an input, represented by a vector of numbers, belongs to some specific class. It is a type of linear classifier, i.e. a classification algorithm that makes its predictions based on a linear predictor function combining a set of weights with the feature vector."
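As a rough illustration of the definition quoted above, here is a minimal sketch of a perceptron trained with the classic perceptron learning rule on the logical AND function. The function names (`predict`, `train`), the learning rate, the epoch count, and the AND data set are assumptions chosen for illustration; none of them come from the lecture.

```python
def predict(weights, bias, x):
    """Linear predictor: output 1 when w . x + b is positive, else 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train(samples, epochs=20, lr=1.0):
    """Perceptron learning rule over (feature_vector, label) pairs."""
    n = len(samples[0][0])
    weights, bias = [0.0] * n, 0.0
    for _ in range(epochs):
        for x, label in samples:
            error = label - predict(weights, bias, x)  # -1, 0, or +1
            # Move the decision boundary only when the prediction is wrong.
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Logical AND: linearly separable, so the rule can find a boundary.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(AND_DATA)
for x, label in AND_DATA:
    print(x, "->", predict(weights, bias, x), "expected", label)
```

Because the perceptron is a linear classifier, the same loop cannot fit a function that is not linearly separable, such as XOR.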

https://en.wikipedia.org/wiki/Cyc

"Cyc is a long-term artificial intelligence project that aims to assemble a comprehensive ontology and knowledge base that spans the basic concepts and rules about how the world works. Hoping to capture common sense knowledge, Cyc focuses on implicit knowledge that other AI platforms may take for granted. This is contrasted with facts one might find somewhere on the internet or retrieve via a search engine or Wikipedia. Cyc enables semantic reasoners to perform human-like reasoning and be less "brittle" when confronted with novel situations.

Douglas Lenat began the project in July 1984 at MCC, where he was Principal Scientist 1984–1994, and then, since January 1995, has been under active development by the Cycorp company, where he was the CEO."

Generative AI (simple description)

https://techsauce.co/saucy-thoughts/what-is-generative-ai-and-how-it-changing-possibility

https://www.mckinsey.com/featured-insights/mckinsey-explainers/what-is-generative-ai

https://en.wikipedia.org/wiki/Generative_adversarial_network

from Wikipedia

"A generative adversarial network (GAN) is a class of machine learning framework and a prominent framework for approaching generative AI. The concept was initially developed by Ian Goodfellow and his colleagues in June 2014. In a GAN, two neural networks contest with each other in the form of a zero-sum game, where one agent's gain is another agent's loss. Given a training set, this technique learns to generate new data with the same statistics as the training set. For example, a GAN trained on photographs can generate new photographs that look at least superficially authentic to human observers, having many realistic characteristics. Though originally proposed as a form of generative model for unsupervised learning, GANs have also proved useful for semi-supervised learning, fully supervised learning, and reinforcement learning."
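The zero-sum game described in the quote is commonly written as a minimax objective. The notation below is an assumption introduced here, not taken from the lecture: G is the generator network, D is the discriminator network, p_data is the distribution of the training data, and p_z is the noise distribution fed to the generator.

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)} \big[ \log D(x) \big]
  + \mathbb{E}_{z \sim p_z(z)} \big[ \log \big( 1 - D(G(z)) \big) \big]
```

D tries to increase V by telling real samples x from generated samples G(z), while G tries to decrease V by producing samples that D accepts as real.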

Key concept: Generate more data from existing data

contact me at: prabhas dot c at chula dot ac dot th

last update 6 Nov 2023